The RFP Response Problem: Why Expensive People Are Doing Document Assembly Work

Sam Okpara

December 2025

A 60-page government RFP lands in your inbox. Buried inside are hundreds of specific requirements -- some explicit, some implied. You need to address every one, provide evidence for your claims, match specific staff to specific roles, prove compliance with a dozen regulations, and format everything to the agency's exact specifications. Miss one requirement? Disqualified. Wrong format? Disqualified.

Most companies handle this with Word documents, spreadsheets, and institutional memory ("I think we used Sarah's bio for something similar last year -- check the shared drive"). The result is that smart, expensive people spend four to five days on what is fundamentally a document assembly problem with very high stakes.

For firms competing for contracts with agencies like PANYNJ, NYPD, or NYC DYCD, this is not a minor inconvenience. It is a structural disadvantage. Firms with fifty people have dedicated proposal teams. Firms with five to fifteen people -- which is most of them -- have founders pulling weekend shifts in Google Docs.

If you submit five proposals to win one, and each one takes a week, every win costs five weeks of senior time. The math does not work.

Why current approaches fail

The standard RFP response workflow has three compounding problems:

Requirement extraction is error-prone. Government RFPs bury requirements across dozens of pages, appendices, and supplementary documents. A single missed paragraph in Appendix C can disqualify an otherwise strong response after forty hours of work.

Institutional knowledge is fragmented. Past proposals, capability statements, project descriptions, and staff resumes live across shared drives, email threads, and the memories of people who may no longer be at the company. Reconstructing this for each proposal is redundant and slow.

The work does not match the talent. Subject matter experts spend the majority of their time on formatting, cross-referencing, and assembly rather than the strategic and technical thinking that actually wins proposals.

The solution is not "work harder." It is to separate the document assembly problem from the strategic thinking problem and automate the first one.

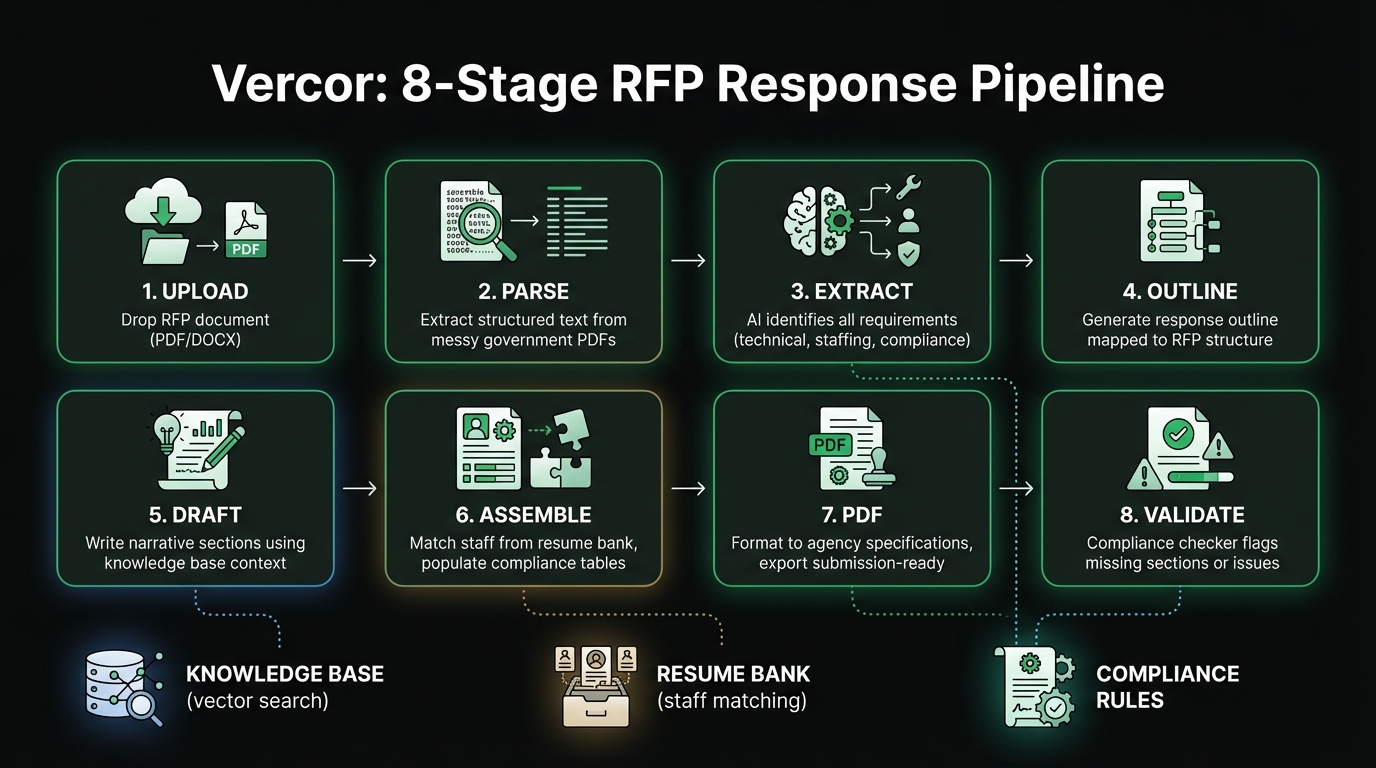

An 8-stage pipeline approach

Vercor is an AI-powered system built to handle the assembly side of RFP responses. It takes an RFP document and produces a draft response -- not a rough outline, but a formatted document with staffing assignments, compliance matrices, and narrative sections ready for human review.

Here is how each stage works:

1. Upload. Drop the RFP document in. PDF, Word, whatever format the agency uses.

2. Parse. Extract structured text from the document. Government PDFs are notoriously messy -- scanned pages, embedded tables, inconsistent formatting. This stage handles the ugly stuff.

3. Extract. Identify every discrete requirement: technical requirements, staffing mandates, compliance criteria, evaluation scoring. Each gets tagged with a category and priority level. This is where catching Appendix C stops depending on human attention span at 2 a.m.

4. Outline. Generate a response outline mapped to the RFP's structure. Mandatory sections first, then scored sections ordered by point value.

5. Draft. Each section gets a narrative draft written to address the specific extracted requirements. The AI draws from the firm's knowledge base -- past proposals, capability statements, project descriptions -- so the language reflects actual experience, not generic filler.

6. Assemble. Staffing assignments are matched from a resume bank. If the RFP requires a "Project Manager with 10+ years of transit experience," the system searches team resumes and recommends the best match. Compliance tables are auto-populated from certification records.

7. Format. The assembled response is formatted to the agency's specifications and exported as a submission-ready PDF. Headers, footers, page numbers, required cover pages -- all handled.

8. Validate. A compliance checker runs through the final document and flags gaps: missing required sections, staff assignments that do not meet minimum qualifications, word count violations, formatting inconsistencies.
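To make the extract, outline, assemble, and validate stages above more concrete, here is a minimal sketch. It is not Vercor's actual code: the `Requirement`, `Section`, and `StaffMember` types, their fields, and the matching rule (same domain, meets the minimum years, prefer the most experienced) are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Requirement:
    """One extracted requirement (hypothetical shape, not Vercor's schema)."""
    ref: str        # where it appears in the RFP, e.g. "Appendix C"
    category: str   # e.g. "technical", "staffing", "compliance"
    priority: str   # "mandatory" or "scored"
    text: str


@dataclass
class Section:
    title: str
    mandatory: bool
    points: int = 0  # evaluation points; 0 for unscored sections


@dataclass
class StaffMember:
    name: str
    domain: str
    years: int


def order_outline(sections: list[Section]) -> list[Section]:
    """Mandatory sections first, then scored sections by descending points."""
    # sorted() is stable, so mandatory sections keep the RFP's original order.
    return sorted(sections, key=lambda s: (0, 0) if s.mandatory else (1, -s.points))


def match_staff(bank: list[StaffMember], domain: str, min_years: int) -> Optional[StaffMember]:
    """Return the most experienced person who meets the minimum, or None."""
    qualified = [p for p in bank if p.domain == domain and p.years >= min_years]
    return max(qualified, key=lambda p: p.years, default=None)


def find_gaps(requirements: list[Requirement], addressed_refs: set[str]) -> list[str]:
    """Flag mandatory requirements that no drafted section claims to address."""
    return [
        r.ref
        for r in requirements
        if r.priority == "mandatory" and r.ref not in addressed_refs
    ]


sections = [
    Section("Technical Approach", mandatory=False, points=40),
    Section("Cover Letter", mandatory=True),
    Section("Past Performance", mandatory=False, points=25),
    Section("Staffing Plan", mandatory=False, points=35),
]
print([s.title for s in order_outline(sections)])
# ['Cover Letter', 'Technical Approach', 'Staffing Plan', 'Past Performance']
```

A real matcher would read free-text resumes rather than a clean `domain` field, and a real validator would also check word counts and formatting. The point of the sketch is only that once requirements are structured data, ordering and gap-checking become mechanical.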

The knowledge base is the real differentiator

The drafting stage is only as good as the source material it can draw from. This is why the knowledge base matters more than the model.

Past proposals, project case studies, capability statements, staff resumes, compliance certifications, and company policies are chunked, embedded, and stored in a vector database. When the system drafts a section about transit infrastructure experience, it pulls from the actual project description written two years ago. When it describes a QA process, it references the real methodology document.
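The chunk, embed, and search flow can be illustrated with a small in-memory sketch. Everything here is a stand-in: `embed` is a toy bag-of-words count rather than a real embedding model, `KnowledgeBase` is a plain Python list rather than a vector database, and the file names are hypothetical. Only the shape of the flow is meant to carry over.

```python
import math
import re
from collections import Counter


def chunk(text: str, max_words: int = 50) -> list[str]:
    """Split a document into fixed-size word windows (a deliberately simple chunker)."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: lowercase term counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class KnowledgeBase:
    """In-memory stand-in for a vector index."""

    def __init__(self) -> None:
        self.entries: list[tuple[str, str, Counter]] = []  # (source, chunk, vector)

    def add(self, source: str, text: str) -> None:
        for piece in chunk(text):
            self.entries.append((source, piece, embed(piece)))

    def search(self, query: str, top_k: int = 3) -> list[tuple[float, str, str]]:
        """Return the top_k (score, source, chunk) tuples, best match first."""
        q = embed(query)
        scored = [(cosine(q, vec), source, piece) for source, piece, vec in self.entries]
        scored.sort(key=lambda item: item[0], reverse=True)
        return scored[:top_k]


kb = KnowledgeBase()
kb.add("transit-project.md", "Rehabilitated signal systems across twelve transit stations on schedule")
kb.add("qa-methodology.md", "Our QA process uses staged reviews and documented acceptance tests")

score, source, piece = kb.search("transit infrastructure experience", top_k=1)[0]
print(source)  # transit-project.md
```

The retrieved chunk, along with its source, is what the drafting stage would pass to the model as context, which is how a draft ends up citing the real project instead of generic language.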

Government evaluators can tell the difference between specific, evidence-backed narratives and vague corporate language. The knowledge base is what makes the output the former rather than the latter.

The infrastructure choice matters

Vercor runs on Cloudflare: Workers for orchestration, D1 for structured data, R2 for document storage, and Vectorize for knowledge base search. Gemini handles AI generation.

This stack choice was deliberate. Government compliance teams ask hard questions about data residency, processing locations, and security posture. Cloudflare's infrastructure provides clear answers, which matters when the buyer is a public agency.

What it does not do

Vercor does not write perfect proposals. It writes strong first drafts that a human can review and refine in hours instead of days.

Subject matter experts still need to review technical sections. Someone still needs to verify staffing assignments against actual availability. A final human eye on the compliance matrix is still necessary.

What gets eliminated is the drudge work: initial assembly, requirement cross-referencing, formatting, and the "did we address section 4.2.3(b)?" checking. The stuff that consumes weekends and makes people question whether government contracting is worth the trouble.

Other honest limitations:

- The knowledge base needs meaningful seed content. A firm with no documented past performance will get weaker drafts until the base is built up.

- Highly technical sections (novel engineering approaches, original research) still need to be written by humans. The AI can structure them but cannot invent genuine technical insight.

- Each agency has formatting quirks that sometimes require manual adjustment after export.

The impact on volume

Cutting each proposal from four or five days of focused work to roughly one day of review and refinement changes the competitive math. That is the difference between submitting three proposals a month and submitting twelve.
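As a back-of-the-envelope check on those numbers, the sketch below assumes a fixed monthly budget of proposal days. The 12-day budget is an assumption chosen for illustration, and the one-in-five win rate is the rough figure used earlier in this post, held constant here for simplicity.

```python
def proposals_per_month(budget_days: int, days_per_proposal: int) -> int:
    """Whole proposals that fit in a monthly budget of focused proposal days."""
    return budget_days // days_per_proposal


BUDGET_DAYS = 12  # assumed: days per month the firm can spend on proposals
WIN_RATE = 1 / 5  # "submit five proposals to win one"

before = proposals_per_month(BUDGET_DAYS, 4)  # four days each (low end of 4-5)
after = proposals_per_month(BUDGET_DAYS, 1)   # roughly one day of review each
print(before, after)  # 3 12

# Expected wins per month if the win rate stays the same at higher volume.
print(round(before * WIN_RATE, 1), round(after * WIN_RATE, 1))  # 0.6 2.4
```

Whether the win rate actually holds at four times the volume depends on how much review time each draft still gets, so treat the second line as an upper-bound illustration rather than a forecast.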

For small firms competing for government contracts, volume matters. You are not going to win every bid. But you cannot win bids you do not submit.

The decision framework for evaluating RFP automation

Not every firm needs an AI-powered RFP pipeline. Here is when it makes sense:

- You respond to 3+ RFPs per month and the assembly time is a bottleneck

- You have documented past performance that can seed a knowledge base

- Your proposals follow repeatable structures (most government RFPs do)

- Your competitive edge is expertise, not formatting -- the automation frees your best people to focus on the parts that actually win

If your firm is spending weekends assembling government proposals and wondering whether there is a better way, Vercor was built for exactly that problem.
